mirror of https://gitlab.com/etke.cc/emm.git
synced 2025-07-09 11:36:11 +00:00

update deps

parent c083a899e9
commit de0ee6222a

17 go.mod

@@ -2,10 +2,21 @@ module gitlab.com/etke.cc/tools/emm

go 1.17

require maunium.net/go/mautrix v0.10.9
require maunium.net/go/mautrix v0.16.2

require (
	github.com/btcsuite/btcutil v1.0.2 // indirect
	golang.org/x/crypto v0.0.0-20211215153901-e495a2d5b3d3 // indirect
	golang.org/x/net v0.0.0-20211216030914-fe4d6282115f // indirect
	github.com/mattn/go-colorable v0.1.13 // indirect
	github.com/mattn/go-isatty v0.0.20 // indirect
	github.com/rs/zerolog v1.31.0 // indirect
	github.com/tidwall/gjson v1.17.0 // indirect
	github.com/tidwall/match v1.1.1 // indirect
	github.com/tidwall/pretty v1.2.1 // indirect
	github.com/tidwall/sjson v1.2.5 // indirect
	go.mau.fi/util v0.2.1 // indirect
	golang.org/x/crypto v0.16.0 // indirect
	golang.org/x/exp v0.0.0-20231127185646-65229373498e // indirect
	golang.org/x/net v0.19.0 // indirect
	golang.org/x/sys v0.15.0 // indirect
	maunium.net/go/maulogger/v2 v2.4.1 // indirect
)

37 go.sum

@@ -9,10 +9,12 @@ github.com/btcsuite/goleveldb v0.0.0-20160330041536-7834afc9e8cd/go.mod h1:F+uVa
github.com/btcsuite/snappy-go v0.0.0-20151229074030-0bdef8d06723/go.mod h1:8woku9dyThutzjeg+3xrA5iCpBRH8XEEg3lh6TiUghc=
github.com/btcsuite/websocket v0.0.0-20150119174127-31079b680792/go.mod h1:ghJtEyQwv5/p4Mg4C0fgbePVuGr935/5ddU9Z3TmDRY=
github.com/btcsuite/winsvc v1.0.0/go.mod h1:jsenWakMcC0zFBFurPLEAyrnc/teJEM1O46fmI40EZs=
github.com/coreos/go-systemd/v22 v22.5.0/go.mod h1:Y58oyj3AT4RCenI/lSvhwexgC+NSVTIJ3seZv2GcEnc=
github.com/davecgh/go-spew v0.0.0-20171005155431-ecdeabc65495/go.mod h1:J7Y8YcW2NihsgmVo/mv3lAwl/skON4iLHjSsI+c5H38=
github.com/davecgh/go-spew v1.1.0 h1:ZDRjVQ15GmhC3fiQ8ni8+OwkZQO4DARzQgrnXU1Liz8=
github.com/davecgh/go-spew v1.1.0/go.mod h1:J7Y8YcW2NihsgmVo/mv3lAwl/skON4iLHjSsI+c5H38=
github.com/fsnotify/fsnotify v1.4.7/go.mod h1:jwhsz4b93w/PPRr/qN1Yymfu8t87LnFCMoQvtojpjFo=
github.com/godbus/dbus/v5 v5.0.4/go.mod h1:xhWf0FNVPg57R7Z0UbKHbJfkEywrmjJnf7w5xrFpKfA=
github.com/golang/protobuf v1.2.0/go.mod h1:6lQm79b+lXiMfvg/cZm0SGofjICqVBUtrP5yJMmIC1U=
github.com/gorilla/mux v1.8.0/go.mod h1:DVbg23sWSpFRCP0SfiEN6jmj59UnW/n46BH5rLB71So=
github.com/gorilla/websocket v1.4.2/go.mod h1:YR8l580nyteQvAITg2hZ9XVh4b55+EU/adAjf1fMHhE=

@@ -20,30 +22,56 @@ github.com/hpcloud/tail v1.0.0/go.mod h1:ab1qPbhIpdTxEkNHXyeSf5vhxWSCs/tWer42PpO
github.com/jessevdk/go-flags v0.0.0-20141203071132-1679536dcc89/go.mod h1:4FA24M0QyGHXBuZZK/XkWh8h0e1EYbRYJSGM75WSRxI=
github.com/jrick/logrotate v1.0.0/go.mod h1:LNinyqDIJnpAur+b8yyulnQw/wDuN1+BYKlTRt3OuAQ=
github.com/kkdai/bstream v0.0.0-20161212061736-f391b8402d23/go.mod h1:J+Gs4SYgM6CZQHDETBtE9HaSEkGmuNXF86RwHhHUvq4=
github.com/mattn/go-colorable v0.1.13 h1:fFA4WZxdEF4tXPZVKMLwD8oUnCTTo08duU7wxecdEvA=
github.com/mattn/go-colorable v0.1.13/go.mod h1:7S9/ev0klgBDR4GtXTXX8a3vIGJpMovkB8vQcUbaXHg=
github.com/mattn/go-isatty v0.0.16/go.mod h1:kYGgaQfpe5nmfYZH+SKPsOc2e4SrIfOl2e/yFXSvRLM=
github.com/mattn/go-isatty v0.0.19/go.mod h1:W+V8PltTTMOvKvAeJH7IuucS94S2C6jfK/D7dTCTo3Y=
github.com/mattn/go-isatty v0.0.20 h1:xfD0iDuEKnDkl03q4limB+vH+GxLEtL/jb4xVJSWWEY=
github.com/mattn/go-isatty v0.0.20/go.mod h1:W+V8PltTTMOvKvAeJH7IuucS94S2C6jfK/D7dTCTo3Y=
github.com/mattn/go-sqlite3 v1.14.10/go.mod h1:NyWgC/yNuGj7Q9rpYnZvas74GogHl5/Z4A/KQRfk6bU=
github.com/onsi/ginkgo v1.6.0/go.mod h1:lLunBs/Ym6LB5Z9jYTR76FiuTmxDTDusOGeTQH+WWjE=
github.com/onsi/ginkgo v1.7.0/go.mod h1:lLunBs/Ym6LB5Z9jYTR76FiuTmxDTDusOGeTQH+WWjE=
github.com/onsi/gomega v1.4.3/go.mod h1:ex+gbHU/CVuBBDIJjb2X0qEXbFg53c61hWP/1CpauHY=
github.com/pkg/errors v0.9.1/go.mod h1:bwawxfHBFNV+L2hUp1rHADufV3IMtnDRdf1r5NINEl0=
github.com/pmezard/go-difflib v1.0.0 h1:4DBwDE0NGyQoBHbLQYPwSUPoCMWR5BEzIk/f1lZbAQM=
github.com/pmezard/go-difflib v1.0.0/go.mod h1:iKH77koFhYxTK1pcRnkKkqfTogsbg7gZNVY4sRDYZ/4=
github.com/rs/xid v1.5.0/go.mod h1:trrq9SKmegXys3aeAKXMUTdJsYXVwGY3RLcfgqegfbg=
github.com/rs/zerolog v1.31.0 h1:FcTR3NnLWW+NnTwwhFWiJSZr4ECLpqCm6QsEnyvbV4A=
github.com/rs/zerolog v1.31.0/go.mod h1:/7mN4D5sKwJLZQ2b/znpjC3/GQWY/xaDXUM0kKWRHss=
github.com/russross/blackfriday/v2 v2.1.0/go.mod h1:+Rmxgy9KzJVeS9/2gXHxylqXiyQDYRxCVz55jmeOWTM=
github.com/stretchr/objx v0.1.0/go.mod h1:HFkY916IF+rwdDfMAkV7OtwuqBVzrE8GR6GFx+wExME=
github.com/stretchr/testify v1.7.0 h1:nwc3DEeHmmLAfoZucVR881uASk0Mfjw8xYJ99tb5CcY=
github.com/stretchr/testify v1.7.0/go.mod h1:6Fq8oRcR53rry900zMqJjRRixrwX3KX962/h/Wwjteg=
github.com/tidwall/gjson v1.10.2/go.mod h1:/wbyibRr2FHMks5tjHJ5F8dMZh3AcwJEMf5vlfC0lxk=
github.com/tidwall/gjson v1.14.2/go.mod h1:/wbyibRr2FHMks5tjHJ5F8dMZh3AcwJEMf5vlfC0lxk=
github.com/tidwall/gjson v1.17.0 h1:/Jocvlh98kcTfpN2+JzGQWQcqrPQwDrVEMApx/M5ZwM=
github.com/tidwall/gjson v1.17.0/go.mod h1:/wbyibRr2FHMks5tjHJ5F8dMZh3AcwJEMf5vlfC0lxk=
github.com/tidwall/match v1.1.1 h1:+Ho715JplO36QYgwN9PGYNhgZvoUSc9X2c80KVTi+GA=
github.com/tidwall/match v1.1.1/go.mod h1:eRSPERbgtNPcGhD8UCthc6PmLEQXEWd3PRB5JTxsfmM=
github.com/tidwall/pretty v1.2.0/go.mod h1:ITEVvHYasfjBbM0u2Pg8T2nJnzm8xPwvNhhsoaGGjNU=
github.com/tidwall/pretty v1.2.1 h1:qjsOFOWWQl+N3RsoF5/ssm1pHmJJwhjlSbZ51I6wMl4=
github.com/tidwall/pretty v1.2.1/go.mod h1:ITEVvHYasfjBbM0u2Pg8T2nJnzm8xPwvNhhsoaGGjNU=
github.com/tidwall/sjson v1.2.3/go.mod h1:5WdjKx3AQMvCJ4RG6/2UYT7dLrGvJUV1x4jdTAyGvZs=
github.com/tidwall/sjson v1.2.5 h1:kLy8mja+1c9jlljvWTlSazM7cKDRfJuR/bOJhcY5NcY=
github.com/tidwall/sjson v1.2.5/go.mod h1:Fvgq9kS/6ociJEDnK0Fk1cpYF4FIW6ZF7LAe+6jwd28=
go.mau.fi/util v0.2.1 h1:eazulhFE/UmjOFtPrGg6zkF5YfAyiDzQb8ihLMbsPWw=
go.mau.fi/util v0.2.1/go.mod h1:MjlzCQEMzJ+G8RsPawHzpLB8rwTo3aPIjG5FzBvQT/c=
golang.org/x/crypto v0.0.0-20170930174604-9419663f5a44/go.mod h1:6SG95UA2DQfeDnfUPMdvaQW0Q7yPrPDi9nlGo2tz2b4=
golang.org/x/crypto v0.0.0-20190308221718-c2843e01d9a2/go.mod h1:djNgcEr1/C05ACkg1iLfiJU5Ep61QUkGW8qpdssI0+w=
golang.org/x/crypto v0.0.0-20200115085410-6d4e4cb37c7d/go.mod h1:LzIPMQfyMNhhGPhUkYOs5KpL4U8rLKemX1yGLhDgUto=
golang.org/x/crypto v0.0.0-20211215153901-e495a2d5b3d3 h1:0es+/5331RGQPcXlMfP+WrnIIS6dNnNRe0WB02W0F4M=
golang.org/x/crypto v0.0.0-20211215153901-e495a2d5b3d3/go.mod h1:IxCIyHEi3zRg3s0A5j5BB6A9Jmi73HwBIUl50j+osU4=
golang.org/x/crypto v0.16.0 h1:mMMrFzRSCF0GvB7Ne27XVtVAaXLrPmgPC7/v0tkwHaY=
golang.org/x/crypto v0.16.0/go.mod h1:gCAAfMLgwOJRpTjQ2zCCt2OcSfYMTeZVSRtQlPC7Nq4=
golang.org/x/exp v0.0.0-20231127185646-65229373498e h1:Gvh4YaCaXNs6dKTlfgismwWZKyjVZXwOPfIyUaqU3No=
golang.org/x/exp v0.0.0-20231127185646-65229373498e/go.mod h1:iRJReGqOEeBhDZGkGbynYwcHlctCvnjTYIamk7uXpHI=
golang.org/x/net v0.0.0-20180906233101-161cd47e91fd/go.mod h1:mL1N/T3taQHkDXs73rZJwtUhF3w3ftmwwsq0BUmARs4=
golang.org/x/net v0.0.0-20190404232315-eb5bcb51f2a3/go.mod h1:t9HGtf8HONx5eT2rtn7q6eTqICYqUVnKs3thJo3Qplg=
golang.org/x/net v0.0.0-20211112202133-69e39bad7dc2/go.mod h1:9nx3DQGgdP8bBQD5qxJ1jj9UTztislL4KSBs9R2vV5Y=
golang.org/x/net v0.0.0-20211216030914-fe4d6282115f h1:hEYJvxw1lSnWIl8X9ofsYMklzaDs90JI2az5YMd4fPM=
golang.org/x/net v0.0.0-20211216030914-fe4d6282115f/go.mod h1:9nx3DQGgdP8bBQD5qxJ1jj9UTztislL4KSBs9R2vV5Y=
golang.org/x/net v0.19.0 h1:zTwKpTd2XuCqf8huc7Fo2iSy+4RHPd10s4KzeTnVr1c=
golang.org/x/net v0.19.0/go.mod h1:CfAk/cbD4CthTvqiEl8NpboMuiuOYsAr/7NOjZJtv1U=
golang.org/x/sync v0.0.0-20180314180146-1d60e4601c6f/go.mod h1:RxMgew5VJxzue5/jJTE5uejpjVlOe/izrB70Jof72aM=
golang.org/x/sys v0.0.0-20180909124046-d0be0721c37e/go.mod h1:STP8DvDyc/dI5b8T5hshtkjS+E42TnysNCUPdjciGhY=
golang.org/x/sys v0.0.0-20190215142949-d0b11bdaac8a/go.mod h1:STP8DvDyc/dI5b8T5hshtkjS+E42TnysNCUPdjciGhY=

@@ -51,6 +79,11 @@ golang.org/x/sys v0.0.0-20190412213103-97732733099d/go.mod h1:h1NjWce9XRLGQEsW7w
golang.org/x/sys v0.0.0-20201119102817-f84b799fce68/go.mod h1:h1NjWce9XRLGQEsW7wpKNCjG9DtNlClVuFLEZdDNbEs=
golang.org/x/sys v0.0.0-20210423082822-04245dca01da/go.mod h1:h1NjWce9XRLGQEsW7wpKNCjG9DtNlClVuFLEZdDNbEs=
golang.org/x/sys v0.0.0-20210615035016-665e8c7367d1/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=
golang.org/x/sys v0.0.0-20220811171246-fbc7d0a398ab/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=
golang.org/x/sys v0.6.0/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=
golang.org/x/sys v0.12.0/go.mod h1:oPkhp1MJrh7nUepCBck5+mAzfO9JrbApNNgaTdGDITg=
golang.org/x/sys v0.15.0 h1:h48lPFYpsTvQJZF4EKyI4aLHaev3CxivZmv7yZig9pc=
golang.org/x/sys v0.15.0/go.mod h1:/VUhepiaJMQUp4+oa/7Zr1D23ma6VTLIYjOOTFZPUcA=
golang.org/x/term v0.0.0-20201126162022-7de9c90e9dd1/go.mod h1:bj7SfCRtBDWHUb9snDiAeCFNEtKQo2Wmx5Cou7ajbmo=
golang.org/x/text v0.3.0/go.mod h1:NqM8EUOU14njkJ3fqMW+pc6Ldnwhi/IjpwHt7yyuwOQ=
golang.org/x/text v0.3.6/go.mod h1:5Zoc/QRtKVWzQhOtBMvqHzDpF6irO9z98xDceosuGiQ=

@@ -63,5 +96,9 @@ gopkg.in/yaml.v2 v2.4.0/go.mod h1:RDklbk79AGWmwhnvt/jBztapEOGDOx6ZbXqjP6csGnQ=
gopkg.in/yaml.v3 v3.0.0-20200313102051-9f266ea9e77c h1:dUUwHk2QECo/6vqA44rthZ8ie2QXMNeKRTHCNY2nXvo=
gopkg.in/yaml.v3 v3.0.0-20200313102051-9f266ea9e77c/go.mod h1:K4uyk7z7BCEPqu6E+C64Yfv1cQ7kz7rIZviUmN+EgEM=
maunium.net/go/maulogger/v2 v2.3.2/go.mod h1:TYWy7wKwz/tIXTpsx8G3mZseIRiC5DoMxSZazOHy68A=
maunium.net/go/maulogger/v2 v2.4.1 h1:N7zSdd0mZkB2m2JtFUsiGTQQAdP0YeFWT7YMc80yAL8=
maunium.net/go/maulogger/v2 v2.4.1/go.mod h1:omPuYwYBILeVQobz8uO3XC8DIRuEb5rXYlQSuqrbCho=
maunium.net/go/mautrix v0.10.9 h1:Xb2lBpjSoMazsSlvsDEqJnuHZDJpYpxwza2N0w60UV0=
maunium.net/go/mautrix v0.10.9/go.mod h1:4XljZZGZiIlpfbQ+Tt2ykjapskJ8a7Z2i9y/+YaceF8=
maunium.net/go/mautrix v0.16.2 h1:a6GUJXNWsTEOO8VE4dROBfCIfPp50mqaqzv7KPzChvg=
maunium.net/go/mautrix v0.16.2/go.mod h1:YL4l4rZB46/vj/ifRMEjcibbvHjgxHftOF1SgmruLu4=

5 justfile

@@ -3,9 +3,8 @@ default:
	@just --list --justfile {{ justfile() }}

# update go deps
update:
	go get ./cmd
	go mod tidy
update *flags:
	go get {{flags}} ./cmd
	go mod vendor

# run linter

16 vendor/github.com/btcsuite/btcutil/LICENSE (generated, vendored)

@@ -1,16 +0,0 @@
ISC License

Copyright (c) 2013-2017 The btcsuite developers
Copyright (c) 2016-2017 The Lightning Network Developers

Permission to use, copy, modify, and distribute this software for any
purpose with or without fee is hereby granted, provided that the above
copyright notice and this permission notice appear in all copies.

THE SOFTWARE IS PROVIDED "AS IS" AND THE AUTHOR DISCLAIMS ALL WARRANTIES
WITH REGARD TO THIS SOFTWARE INCLUDING ALL IMPLIED WARRANTIES OF
MERCHANTABILITY AND FITNESS. IN NO EVENT SHALL THE AUTHOR BE LIABLE FOR
ANY SPECIAL, DIRECT, INDIRECT, OR CONSEQUENTIAL DAMAGES OR ANY DAMAGES
WHATSOEVER RESULTING FROM LOSS OF USE, DATA OR PROFITS, WHETHER IN AN
ACTION OF CONTRACT, NEGLIGENCE OR OTHER TORTIOUS ACTION, ARISING OUT OF
OR IN CONNECTION WITH THE USE OR PERFORMANCE OF THIS SOFTWARE.

34 vendor/github.com/btcsuite/btcutil/base58/README.md (generated, vendored)

@@ -1,34 +0,0 @@
base58
==========

[](https://travis-ci.org/btcsuite/btcutil)
[](http://copyfree.org)
[](http://godoc.org/github.com/btcsuite/btcutil/base58)

Package base58 provides an API for encoding and decoding to and from the
modified base58 encoding. It also provides an API to do Base58Check encoding,
as described [here](https://en.bitcoin.it/wiki/Base58Check_encoding).

A comprehensive suite of tests is provided to ensure proper functionality.

## Installation and Updating

```bash
$ go get -u github.com/btcsuite/btcutil/base58
```

## Examples

* [Decode Example](http://godoc.org/github.com/btcsuite/btcutil/base58#example-Decode)
  Demonstrates how to decode modified base58 encoded data.
* [Encode Example](http://godoc.org/github.com/btcsuite/btcutil/base58#example-Encode)
  Demonstrates how to encode data using the modified base58 encoding scheme.
* [CheckDecode Example](http://godoc.org/github.com/btcsuite/btcutil/base58#example-CheckDecode)
  Demonstrates how to decode Base58Check encoded data.
* [CheckEncode Example](http://godoc.org/github.com/btcsuite/btcutil/base58#example-CheckEncode)
  Demonstrates how to encode data using the Base58Check encoding scheme.

## License

Package base58 is licensed under the [copyfree](http://copyfree.org) ISC
License.

75 vendor/github.com/btcsuite/btcutil/base58/base58.go (generated, vendored)

@@ -1,75 +0,0 @@
// Copyright (c) 2013-2015 The btcsuite developers
// Use of this source code is governed by an ISC
// license that can be found in the LICENSE file.

package base58

import (
	"math/big"
)

//go:generate go run genalphabet.go

var bigRadix = big.NewInt(58)
var bigZero = big.NewInt(0)

// Decode decodes a modified base58 string to a byte slice.
func Decode(b string) []byte {
	answer := big.NewInt(0)
	j := big.NewInt(1)

	scratch := new(big.Int)
	for i := len(b) - 1; i >= 0; i-- {
		tmp := b58[b[i]]
		if tmp == 255 {
			return []byte("")
		}
		scratch.SetInt64(int64(tmp))
		scratch.Mul(j, scratch)
		answer.Add(answer, scratch)
		j.Mul(j, bigRadix)
	}

	tmpval := answer.Bytes()

	var numZeros int
	for numZeros = 0; numZeros < len(b); numZeros++ {
		if b[numZeros] != alphabetIdx0 {
			break
		}
	}
	flen := numZeros + len(tmpval)
	val := make([]byte, flen)
	copy(val[numZeros:], tmpval)

	return val
}

// Encode encodes a byte slice to a modified base58 string.
func Encode(b []byte) string {
	x := new(big.Int)
	x.SetBytes(b)

	answer := make([]byte, 0, len(b)*136/100)
	for x.Cmp(bigZero) > 0 {
		mod := new(big.Int)
		x.DivMod(x, bigRadix, mod)
		answer = append(answer, alphabet[mod.Int64()])
	}

	// leading zero bytes
	for _, i := range b {
		if i != 0 {
			break
		}
		answer = append(answer, alphabetIdx0)
	}

	// reverse
	alen := len(answer)
	for i := 0; i < alen/2; i++ {
		answer[i], answer[alen-1-i] = answer[alen-1-i], answer[i]
	}

	return string(answer)
}
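The deleted `Encode`/`Decode` pair treats its input as a single big-endian integer. Encoding repeatedly divides by 58 and maps each remainder to an alphabet character. Each leading zero byte becomes one copy of the alphabet's first character (`alphabetIdx0`). The `alphabet`, `b58` and `alphabetIdx0` tables come from a generated file, produced by `genalphabet.go`, that is not part of this diff. The self-contained sketch below therefore assumes the Bitcoin alphabet.

It differs from the vendored code in two deliberate ways. First, `decode` accumulates digits left to right (Horner's rule) instead of right to left with a running power of 58; both produce the same value. Second, it returns an error for invalid characters instead of an empty slice.

```go
package main

import (
	"errors"
	"fmt"
	"math/big"
	"strings"
)

// Assumption: the Bitcoin base58 alphabet. The vendored package builds its
// own tables via genalphabet.go, which this diff does not show.
const alphabet = "123456789ABCDEFGHJKLMNPQRSTUVWXYZabcdefghijkmnopqrstuvwxyz"

var radix = big.NewInt(58)

// encode converts b to base58, emitting one alphabet[0] per leading zero byte.
func encode(b []byte) string {
	x := new(big.Int).SetBytes(b)
	mod := new(big.Int)
	var out []byte
	for x.Sign() > 0 {
		x.DivMod(x, radix, mod)
		out = append(out, alphabet[mod.Int64()])
	}
	for _, c := range b {
		if c != 0 {
			break
		}
		out = append(out, alphabet[0])
	}
	// Digits were produced least-significant first, so reverse them.
	for i, j := 0, len(out)-1; i < j; i, j = i+1, j-1 {
		out[i], out[j] = out[j], out[i]
	}
	return string(out)
}

// decode is the inverse of encode. Unlike the vendored Decode, it reports
// invalid characters as an error instead of returning an empty slice.
func decode(s string) ([]byte, error) {
	x := new(big.Int)
	for i := 0; i < len(s); i++ {
		d := strings.IndexByte(alphabet, s[i])
		if d < 0 {
			return nil, errors.New("base58: invalid character")
		}
		x.Mul(x, radix)
		x.Add(x, big.NewInt(int64(d)))
	}
	// Restore the zero bytes that the integer value cannot represent.
	zeros := 0
	for zeros < len(s) && s[zeros] == alphabet[0] {
		zeros++
	}
	tail := x.Bytes()
	out := make([]byte, zeros+len(tail))
	copy(out[zeros:], tail)
	return out, nil
}

func main() {
	enc := encode([]byte("abc"))
	dec, err := decode(enc)
	fmt.Println(enc, string(dec), err) // prints: ZiCa abc <nil>
}
```

Leading zero bytes carry no numeric value, so both directions track them separately. Without that step, `[]byte{0, 0, 1}` and `[]byte{1}` would both encode to `"2"`.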

17 vendor/github.com/btcsuite/btcutil/base58/cov_report.sh (generated, vendored)

@@ -1,17 +0,0 @@
#!/bin/sh

# This script uses gocov to generate a test coverage report.
# The gocov tool my be obtained with the following command:
# go get github.com/axw/gocov/gocov
#
# It will be installed to $GOPATH/bin, so ensure that location is in your $PATH.

# Check for gocov.
type gocov >/dev/null 2>&1
if [ $? -ne 0 ]; then
	echo >&2 "This script requires the gocov tool."
	echo >&2 "You may obtain it with the following command:"
	echo >&2 "go get github.com/axw/gocov/gocov"
	exit 1
fi
gocov test | gocov report

21 vendor/github.com/mattn/go-colorable/LICENSE (generated, vendored, Normal file)

@@ -0,0 +1,21 @@
The MIT License (MIT)

Copyright (c) 2016 Yasuhiro Matsumoto

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.

|

||||

48

vendor/github.com/mattn/go-colorable/README.md

generated

vendored

Normal file

48

vendor/github.com/mattn/go-colorable/README.md

generated

vendored

Normal file

@ -0,0 +1,48 @@

|

||||

# go-colorable

|

||||

|

||||

[](https://github.com/mattn/go-colorable/actions?query=workflow%3Atest)

|

||||

[](https://codecov.io/gh/mattn/go-colorable)

|

||||

[](http://godoc.org/github.com/mattn/go-colorable)

|

||||

[](https://goreportcard.com/report/mattn/go-colorable)

|

||||

|

||||

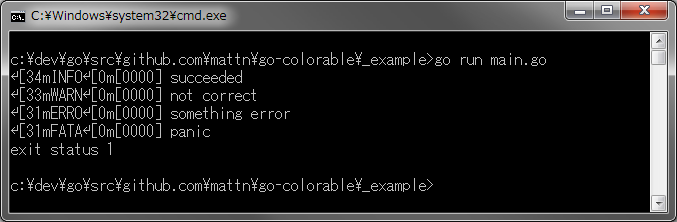

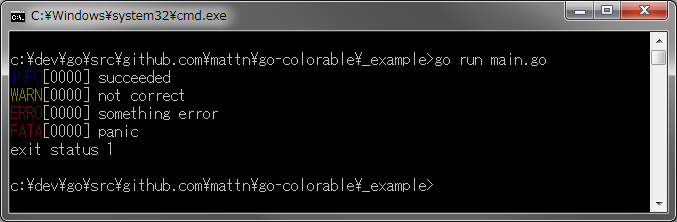

Colorable writer for windows.

|

||||

|

||||

For example, most of logger packages doesn't show colors on windows. (I know we can do it with ansicon. But I don't want.)

|

||||

This package is possible to handle escape sequence for ansi color on windows.

|

||||

|

||||

## Too Bad!

|

||||

|

||||

|

||||

|

||||

|

||||

## So Good!

|

||||

|

||||

|

||||

|

||||

## Usage

|

||||

|

||||

```go

|

||||

logrus.SetFormatter(&logrus.TextFormatter{ForceColors: true})

|

||||

logrus.SetOutput(colorable.NewColorableStdout())

|

||||

|

||||

logrus.Info("succeeded")

|

||||

logrus.Warn("not correct")

|

||||

logrus.Error("something error")

|

||||

logrus.Fatal("panic")

|

||||

```

|

||||

|

||||

You can compile above code on non-windows OSs.

|

||||

|

||||

## Installation

|

||||

|

||||

```

|

||||

$ go get github.com/mattn/go-colorable

|

||||

```

|

||||

|

||||

# License

|

||||

|

||||

MIT

|

||||

|

||||

# Author

|

||||

|

||||

Yasuhiro Matsumoto (a.k.a mattn)

38 vendor/github.com/mattn/go-colorable/colorable_appengine.go (generated, vendored, Normal file)

@@ -0,0 +1,38 @@
//go:build appengine
// +build appengine

package colorable

import (
	"io"
	"os"

	_ "github.com/mattn/go-isatty"
)

// NewColorable returns new instance of Writer which handles escape sequence.
func NewColorable(file *os.File) io.Writer {
	if file == nil {
		panic("nil passed instead of *os.File to NewColorable()")
	}

	return file
}

// NewColorableStdout returns new instance of Writer which handles escape sequence for stdout.
func NewColorableStdout() io.Writer {
	return os.Stdout
}

// NewColorableStderr returns new instance of Writer which handles escape sequence for stderr.
func NewColorableStderr() io.Writer {
	return os.Stderr
}

// EnableColorsStdout enable colors if possible.
func EnableColorsStdout(enabled *bool) func() {
	if enabled != nil {
		*enabled = true
	}
	return func() {}
}

38 vendor/github.com/mattn/go-colorable/colorable_others.go (generated, vendored, Normal file)

@@ -0,0 +1,38 @@
//go:build !windows && !appengine
// +build !windows,!appengine

package colorable

import (
	"io"
	"os"

	_ "github.com/mattn/go-isatty"
)

// NewColorable returns new instance of Writer which handles escape sequence.
func NewColorable(file *os.File) io.Writer {
	if file == nil {
		panic("nil passed instead of *os.File to NewColorable()")
	}

	return file
}

// NewColorableStdout returns new instance of Writer which handles escape sequence for stdout.
func NewColorableStdout() io.Writer {
	return os.Stdout
}

// NewColorableStderr returns new instance of Writer which handles escape sequence for stderr.
func NewColorableStderr() io.Writer {
	return os.Stderr
}

// EnableColorsStdout enable colors if possible.
func EnableColorsStdout(enabled *bool) func() {
	if enabled != nil {
		*enabled = true
	}
	return func() {}
}

1047 vendor/github.com/mattn/go-colorable/colorable_windows.go (generated, vendored, Normal file)

File diff suppressed because it is too large

12 vendor/github.com/mattn/go-colorable/go.test.sh (generated, vendored, Normal file)

@@ -0,0 +1,12 @@
#!/usr/bin/env bash

set -e
echo "" > coverage.txt

for d in $(go list ./... | grep -v vendor); do
    go test -race -coverprofile=profile.out -covermode=atomic "$d"
    if [ -f profile.out ]; then
        cat profile.out >> coverage.txt
        rm profile.out
    fi
done

57 vendor/github.com/mattn/go-colorable/noncolorable.go (generated, vendored, Normal file)

@@ -0,0 +1,57 @@
package colorable

import (
	"bytes"
	"io"
)

// NonColorable holds writer but removes escape sequence.
type NonColorable struct {
	out io.Writer
}

// NewNonColorable returns new instance of Writer which removes escape sequence from Writer.
func NewNonColorable(w io.Writer) io.Writer {
	return &NonColorable{out: w}
}

// Write writes data on console
func (w *NonColorable) Write(data []byte) (n int, err error) {
	er := bytes.NewReader(data)
	var plaintext bytes.Buffer
loop:
	for {
		c1, err := er.ReadByte()
		if err != nil {
			plaintext.WriteTo(w.out)
			break loop
		}
		if c1 != 0x1b {
			plaintext.WriteByte(c1)
			continue
		}
		_, err = plaintext.WriteTo(w.out)
		if err != nil {
			break loop
		}
		c2, err := er.ReadByte()
		if err != nil {
			break loop
		}
		if c2 != 0x5b {
			continue
		}

		for {
			c, err := er.ReadByte()
			if err != nil {
				break loop
			}
			if ('a' <= c && c <= 'z') || ('A' <= c && c <= 'Z') || c == '@' {
				break
			}
		}
	}

	return len(data), nil
}

9 vendor/github.com/mattn/go-isatty/LICENSE (generated, vendored, Normal file)

@@ -0,0 +1,9 @@
Copyright (c) Yasuhiro MATSUMOTO <mattn.jp@gmail.com>

MIT License (Expat)

Permission is hereby granted, free of charge, to any person obtaining a copy of this software and associated documentation files (the "Software"), to deal in the Software without restriction, including without limitation the rights to use, copy, modify, merge, publish, distribute, sublicense, and/or sell copies of the Software, and to permit persons to whom the Software is furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE SOFTWARE.

50 vendor/github.com/mattn/go-isatty/README.md (generated, vendored, Normal file)

@@ -0,0 +1,50 @@
# go-isatty

[](http://godoc.org/github.com/mattn/go-isatty)
[](https://codecov.io/gh/mattn/go-isatty)
[](https://coveralls.io/github/mattn/go-isatty?branch=master)
[](https://goreportcard.com/report/mattn/go-isatty)

isatty for golang

## Usage

```go
package main

import (
	"fmt"
	"github.com/mattn/go-isatty"
	"os"
)

func main() {
	if isatty.IsTerminal(os.Stdout.Fd()) {
		fmt.Println("Is Terminal")
	} else if isatty.IsCygwinTerminal(os.Stdout.Fd()) {
		fmt.Println("Is Cygwin/MSYS2 Terminal")
	} else {
		fmt.Println("Is Not Terminal")
	}
}
```

## Installation

```
$ go get github.com/mattn/go-isatty
```

## License

MIT

## Author

Yasuhiro Matsumoto (a.k.a mattn)

## Thanks

* k-takata: base idea for IsCygwinTerminal

https://github.com/k-takata/go-iscygpty

2 vendor/github.com/mattn/go-isatty/doc.go (generated, vendored, Normal file)

@@ -0,0 +1,2 @@
// Package isatty implements interface to isatty
package isatty

12 vendor/github.com/mattn/go-isatty/go.test.sh (generated, vendored, Normal file)

@@ -0,0 +1,12 @@
#!/usr/bin/env bash

set -e
echo "" > coverage.txt

for d in $(go list ./... | grep -v vendor); do
    go test -race -coverprofile=profile.out -covermode=atomic "$d"
    if [ -f profile.out ]; then
        cat profile.out >> coverage.txt
        rm profile.out
    fi
done

20 vendor/github.com/mattn/go-isatty/isatty_bsd.go (generated, vendored, Normal file)

@@ -0,0 +1,20 @@
//go:build (darwin || freebsd || openbsd || netbsd || dragonfly || hurd) && !appengine && !tinygo
// +build darwin freebsd openbsd netbsd dragonfly hurd
// +build !appengine
// +build !tinygo

package isatty

import "golang.org/x/sys/unix"

// IsTerminal return true if the file descriptor is terminal.
func IsTerminal(fd uintptr) bool {
	_, err := unix.IoctlGetTermios(int(fd), unix.TIOCGETA)
	return err == nil
}

// IsCygwinTerminal return true if the file descriptor is a cygwin or msys2
// terminal. This is also always false on this environment.
func IsCygwinTerminal(fd uintptr) bool {
	return false
}

17 vendor/github.com/mattn/go-isatty/isatty_others.go (generated, vendored, Normal file)

@@ -0,0 +1,17 @@
//go:build (appengine || js || nacl || tinygo || wasm) && !windows
// +build appengine js nacl tinygo wasm
// +build !windows

package isatty

// IsTerminal returns true if the file descriptor is terminal which
// is always false on js and appengine classic which is a sandboxed PaaS.
func IsTerminal(fd uintptr) bool {
	return false
}

// IsCygwinTerminal() return true if the file descriptor is a cygwin or msys2
// terminal. This is also always false on this environment.
func IsCygwinTerminal(fd uintptr) bool {
	return false
}

23 vendor/github.com/mattn/go-isatty/isatty_plan9.go (generated, vendored, Normal file)

@@ -0,0 +1,23 @@
//go:build plan9
// +build plan9

package isatty

import (
	"syscall"
)

// IsTerminal returns true if the given file descriptor is a terminal.
func IsTerminal(fd uintptr) bool {
	path, err := syscall.Fd2path(int(fd))
	if err != nil {
		return false
	}
	return path == "/dev/cons" || path == "/mnt/term/dev/cons"
}

// IsCygwinTerminal return true if the file descriptor is a cygwin or msys2
// terminal. This is also always false on this environment.
func IsCygwinTerminal(fd uintptr) bool {
	return false
}

|

||||

21

vendor/github.com/mattn/go-isatty/isatty_solaris.go

generated

vendored

Normal file

@ -0,0 +1,21 @@

//go:build solaris && !appengine
// +build solaris,!appengine

package isatty

import (
	"golang.org/x/sys/unix"
)

// IsTerminal returns true if the given file descriptor is a terminal.
// see: https://src.illumos.org/source/xref/illumos-gate/usr/src/lib/libc/port/gen/isatty.c
func IsTerminal(fd uintptr) bool {
	_, err := unix.IoctlGetTermio(int(fd), unix.TCGETA)
	return err == nil
}

// IsCygwinTerminal return true if the file descriptor is a cygwin or msys2
// terminal. This is also always false on this environment.
func IsCygwinTerminal(fd uintptr) bool {
	return false
}

20

vendor/github.com/mattn/go-isatty/isatty_tcgets.go

generated

vendored

Normal file

@ -0,0 +1,20 @@

//go:build (linux || aix || zos) && !appengine && !tinygo
// +build linux aix zos
// +build !appengine
// +build !tinygo

package isatty

import "golang.org/x/sys/unix"

// IsTerminal return true if the file descriptor is terminal.
func IsTerminal(fd uintptr) bool {
	_, err := unix.IoctlGetTermios(int(fd), unix.TCGETS)
	return err == nil
}

// IsCygwinTerminal return true if the file descriptor is a cygwin or msys2
// terminal. This is also always false on this environment.
func IsCygwinTerminal(fd uintptr) bool {
	return false
}

125

vendor/github.com/mattn/go-isatty/isatty_windows.go

generated

vendored

Normal file

@ -0,0 +1,125 @@

//go:build windows && !appengine
// +build windows,!appengine

package isatty

import (
	"errors"
	"strings"
	"syscall"
	"unicode/utf16"
	"unsafe"
)

const (
	objectNameInfo uintptr = 1
	fileNameInfo           = 2
	fileTypePipe           = 3
)

var (
	kernel32                         = syscall.NewLazyDLL("kernel32.dll")
	ntdll                            = syscall.NewLazyDLL("ntdll.dll")
	procGetConsoleMode               = kernel32.NewProc("GetConsoleMode")
	procGetFileInformationByHandleEx = kernel32.NewProc("GetFileInformationByHandleEx")
	procGetFileType                  = kernel32.NewProc("GetFileType")
	procNtQueryObject                = ntdll.NewProc("NtQueryObject")
)

func init() {
	// Check if GetFileInformationByHandleEx is available.
	if procGetFileInformationByHandleEx.Find() != nil {
		procGetFileInformationByHandleEx = nil
	}
}

// IsTerminal return true if the file descriptor is terminal.
func IsTerminal(fd uintptr) bool {
	var st uint32
	r, _, e := syscall.Syscall(procGetConsoleMode.Addr(), 2, fd, uintptr(unsafe.Pointer(&st)), 0)
	return r != 0 && e == 0
}

// Check pipe name is used for cygwin/msys2 pty.
// Cygwin/MSYS2 PTY has a name like:
// \{cygwin,msys}-XXXXXXXXXXXXXXXX-ptyN-{from,to}-master
func isCygwinPipeName(name string) bool {
	token := strings.Split(name, "-")
	if len(token) < 5 {
		return false
	}

	if token[0] != `\msys` &&
		token[0] != `\cygwin` &&
		token[0] != `\Device\NamedPipe\msys` &&
		token[0] != `\Device\NamedPipe\cygwin` {
		return false
	}

	if token[1] == "" {
		return false
	}

	if !strings.HasPrefix(token[2], "pty") {
		return false
	}

	if token[3] != `from` && token[3] != `to` {
		return false
	}

	if token[4] != "master" {
		return false
	}

	return true
}

// getFileNameByHandle use the undocomented ntdll NtQueryObject to get file full name from file handler
// since GetFileInformationByHandleEx is not available under windows Vista and still some old fashion
// guys are using Windows XP, this is a workaround for those guys, it will also work on system from
// Windows vista to 10
// see https://stackoverflow.com/a/18792477 for details
func getFileNameByHandle(fd uintptr) (string, error) {
	if procNtQueryObject == nil {
		return "", errors.New("ntdll.dll: NtQueryObject not supported")
	}

	var buf [4 + syscall.MAX_PATH]uint16
	var result int
	r, _, e := syscall.Syscall6(procNtQueryObject.Addr(), 5,
		fd, objectNameInfo, uintptr(unsafe.Pointer(&buf)), uintptr(2*len(buf)), uintptr(unsafe.Pointer(&result)), 0)
	if r != 0 {
		return "", e
	}
	return string(utf16.Decode(buf[4 : 4+buf[0]/2])), nil
}

// IsCygwinTerminal() return true if the file descriptor is a cygwin or msys2
// terminal.
func IsCygwinTerminal(fd uintptr) bool {
	if procGetFileInformationByHandleEx == nil {
		name, err := getFileNameByHandle(fd)
		if err != nil {
			return false
		}
		return isCygwinPipeName(name)
	}

	// Cygwin/msys's pty is a pipe.
	ft, _, e := syscall.Syscall(procGetFileType.Addr(), 1, fd, 0, 0)
	if ft != fileTypePipe || e != 0 {
		return false
	}

	var buf [2 + syscall.MAX_PATH]uint16
	r, _, e := syscall.Syscall6(procGetFileInformationByHandleEx.Addr(),
		4, fd, fileNameInfo, uintptr(unsafe.Pointer(&buf)),
		uintptr(len(buf)*2), 0, 0)
	if r == 0 || e != 0 {
		return false
	}

	l := *(*uint32)(unsafe.Pointer(&buf))
	return isCygwinPipeName(string(utf16.Decode(buf[2 : 2+l/2])))
}

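The pipe-name rule enforced by `isCygwinPipeName` in the Windows file can be tried out on any platform. The following standalone sketch re-implements the same five token checks; the function name `cygwinPTYName` and the sample pipe names are illustrative assumptions, not part of go-isatty.

```go
package main

import (
	"fmt"
	"strings"
)

// cygwinPTYName mirrors the token checks of isCygwinPipeName so the naming
// rule can be exercised without Windows.
// Expected shape: \{cygwin,msys}-XXXXXXXXXXXXXXXX-ptyN-{from,to}-master
func cygwinPTYName(name string) bool {
	token := strings.Split(name, "-")
	if len(token) < 5 {
		return false
	}
	// The prefix must name an msys or cygwin pipe, with or without the
	// \Device\NamedPipe namespace.
	switch token[0] {
	case `\msys`, `\cygwin`, `\Device\NamedPipe\msys`, `\Device\NamedPipe\cygwin`:
	default:
		return false
	}
	return token[1] != "" &&
		strings.HasPrefix(token[2], "pty") &&
		(token[3] == "from" || token[3] == "to") &&
		token[4] == "master"
}

func main() {
	// Sample names are made up for illustration.
	for _, name := range []string{
		`\msys-0123456789abcdef-pty0-to-master`,
		`\Device\NamedPipe\cygwin-0123456789abcdef-pty1-from-master`,
		`\msys-0123456789abcdef-pty0-to-slave`,
		`\other-0123456789abcdef-pty0-to-master`,
	} {
		fmt.Printf("%-60s %v\n", name, cygwinPTYName(name))
	}
}
```

As in the original, only the first five dash-separated tokens are inspected, so a name with extra segments after `master` is still accepted.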
25

vendor/github.com/rs/zerolog/.gitignore

generated

vendored

Normal file

@ -0,0 +1,25 @@

# Compiled Object files, Static and Dynamic libs (Shared Objects)
*.o
*.a
*.so

# Folders
_obj
_test
tmp

# Architecture specific extensions/prefixes
*.[568vq]
[568vq].out

*.cgo1.go
*.cgo2.c
_cgo_defun.c
_cgo_gotypes.go
_cgo_export.*

_testmain.go

*.exe
*.test
*.prof

1

vendor/github.com/rs/zerolog/CNAME

generated

vendored

Normal file

@ -0,0 +1 @@

zerolog.io

21

vendor/github.com/rs/zerolog/LICENSE

generated

vendored

Normal file

@ -0,0 +1,21 @@

MIT License

Copyright (c) 2017 Olivier Poitrey

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.

786

vendor/github.com/rs/zerolog/README.md

generated

vendored

Normal file

@ -0,0 +1,786 @@

# Zero Allocation JSON Logger

[godoc](https://godoc.org/github.com/rs/zerolog) [license](https://raw.githubusercontent.com/rs/zerolog/master/LICENSE) [Build Status](https://github.com/rs/zerolog/actions/workflows/test.yml) [Coverage](https://raw.githack.com/wiki/rs/zerolog/coverage.html)

The zerolog package provides a fast and simple logger dedicated to JSON output.

Zerolog's API is designed to provide both a great developer experience and stunning [performance](#benchmarks). Its unique chaining API allows zerolog to write JSON (or CBOR) log events by avoiding allocations and reflection.

Uber's [zap](https://godoc.org/go.uber.org/zap) library pioneered this approach. Zerolog takes this concept to the next level with a simpler-to-use API and even better performance.

To keep the code base and the API simple, zerolog focuses on efficient structured logging only. Pretty logging on the console is made possible using the provided (but inefficient) [`zerolog.ConsoleWriter`](#pretty-logging).

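The chaining idea described above can be illustrated with a small standard-library sketch. It is not zerolog's implementation: the `event` type and its `Str`, `Float64` and `Msg` methods are hypothetical stand-ins that append typed key/value pairs straight into a byte slice with the `strconv.Append*` functions, so no `encoding/json` reflection is involved.

```go
package main

import (
	"os"
	"strconv"
)

// event is a hypothetical, minimal stand-in for a chained log event. Each
// typed method appends one JSON key/value pair to buf, so no reflection or
// intermediate map is needed.
type event struct {
	buf []byte
}

func newEvent(level string) *event {
	e := &event{buf: make([]byte, 0, 256)}
	e.buf = append(e.buf, `{"level":`...)
	e.buf = strconv.AppendQuote(e.buf, level)
	return e
}

// key writes the field separator and the quoted field name.
func (e *event) key(k string) {
	e.buf = append(e.buf, ',')
	e.buf = strconv.AppendQuote(e.buf, k)
	e.buf = append(e.buf, ':')
}

func (e *event) Str(k, v string) *event {
	e.key(k)
	e.buf = strconv.AppendQuote(e.buf, v)
	return e
}

func (e *event) Float64(k string, v float64) *event {
	e.key(k)
	e.buf = strconv.AppendFloat(e.buf, v, 'f', -1, 64)
	return e
}

// Msg terminates the chain: it adds the message, closes the JSON object and
// returns the encoded line.
func (e *event) Msg(msg string) []byte {
	e.key("message")
	e.buf = strconv.AppendQuote(e.buf, msg)
	return append(e.buf, '}', '\n')
}

func main() {
	line := newEvent("debug").
		Str("Scale", "833 cents").
		Float64("Interval", 833.09).
		Msg("Fibonacci is everywhere")
	os.Stdout.Write(line)
	// {"level":"debug","Scale":"833 cents","Interval":833.09,"message":"Fibonacci is everywhere"}
}
```

This sketch still allocates a fresh buffer per event; a logger aiming for zero allocations would also pool and reuse buffers. `strconv.AppendQuote` escapes the way Go does, which matches JSON only for plain printable text, so a real encoder needs JSON-specific escaping.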
## Who uses zerolog

Find out [who uses zerolog](https://github.com/rs/zerolog/wiki/Who-uses-zerolog) and add your company / project to the list.

## Features

* [Blazing fast](#benchmarks)
* [Low to zero allocation](#benchmarks)
* [Leveled logging](#leveled-logging)
* [Sampling](#log-sampling)
* [Hooks](#hooks)
* [Contextual fields](#contextual-logging)
* [`context.Context` integration](#contextcontext-integration)
* [Integration with `net/http`](#integration-with-nethttp)
* [JSON and CBOR encoding formats](#binary-encoding)
* [Pretty logging for development](#pretty-logging)
* [Error Logging (with optional Stacktrace)](#error-logging)

## Installation

```bash
go get -u github.com/rs/zerolog/log
```

## Getting Started

### Simple Logging Example

For simple logging, import the global logger package **github.com/rs/zerolog/log**:

```go
package main

import (
	"github.com/rs/zerolog"
	"github.com/rs/zerolog/log"
)

func main() {
	// UNIX Time is faster and smaller than most timestamps
	zerolog.TimeFieldFormat = zerolog.TimeFormatUnix

	log.Print("hello world")
}

// Output: {"time":1516134303,"level":"debug","message":"hello world"}
```

> Note: By default, `log` writes to `os.Stderr`.
>
> Note: The default level for `log.Print` is *debug*.

### Contextual Logging

**zerolog** lets you add data to log messages in the form of key:value pairs. This data adds "context" about the log event that can be critical for debugging, among many other purposes. For example:

```go
package main

import (
	"github.com/rs/zerolog"
	"github.com/rs/zerolog/log"
)

func main() {
	zerolog.TimeFieldFormat = zerolog.TimeFormatUnix

	log.Debug().
		Str("Scale", "833 cents").
		Float64("Interval", 833.09).
		Msg("Fibonacci is everywhere")

	log.Debug().
		Str("Name", "Tom").
		Send()
}

// Output: {"level":"debug","Scale":"833 cents","Interval":833.09,"time":1562212768,"message":"Fibonacci is everywhere"}
// Output: {"level":"debug","Name":"Tom","time":1562212768}
```

> Note that in the example above the contextual fields are strongly typed. You can find the [full list of supported field types](#standard-types).

### Leveled Logging

#### Simple Leveled Logging Example

```go
package main

import (
	"github.com/rs/zerolog"
	"github.com/rs/zerolog/log"
)

func main() {
	zerolog.TimeFieldFormat = zerolog.TimeFormatUnix

	log.Info().Msg("hello world")
}

// Output: {"time":1516134303,"level":"info","message":"hello world"}
```

> It is very important to note that when using the **zerolog** chaining API, as shown above (`log.Info().Msg("hello world")`), the chain must end with a call to `Msg` or `Msgf` (or `Send`, as in the contextual logging example). If you forget, the event is not logged, and there is no compile-time error to alert you.

**zerolog** allows for logging at the following levels (from highest to lowest):

* panic (`zerolog.PanicLevel`, 5)
* fatal (`zerolog.FatalLevel`, 4)
* error (`zerolog.ErrorLevel`, 3)
* warn (`zerolog.WarnLevel`, 2)
* info (`zerolog.InfoLevel`, 1)
* debug (`zerolog.DebugLevel`, 0)
* trace (`zerolog.TraceLevel`, -1)

You can set the global logging level with the `SetGlobalLevel` function in the zerolog package, passing one of the constants above; for example, `zerolog.InfoLevel` selects the "info" level. Whichever level is chosen, only events with a level greater than or equal to it are written. To turn off logging entirely, pass the `zerolog.Disabled` constant.

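The threshold rule can be sketched with the standard library alone. The `Level` type, its constants and the `SetGlobalLevel`/`enabled` functions below are hypothetical mirrors of the numeric values listed above, not zerolog's own `zerolog.Level`; `Disabled` is modeled only as "a value above every real level", which is an assumption of this sketch.

```go
package main

import (
	"fmt"
	"sync/atomic"
)

// Level mirrors the numeric ordering listed above (hypothetical type, not
// zerolog.Level).
type Level int8

const (
	TraceLevel Level = -1
	DebugLevel Level = 0
	InfoLevel  Level = 1
	WarnLevel  Level = 2
	ErrorLevel Level = 3
	FatalLevel Level = 4
	PanicLevel Level = 5
	// Disabled is modeled as any value above PanicLevel.
	Disabled Level = PanicLevel + 1
)

// globalLevel is stored atomically so it can be changed while other
// goroutines are logging.
var globalLevel int32 = int32(TraceLevel)

// SetGlobalLevel sets the minimum level an event needs to be written.
func SetGlobalLevel(l Level) { atomic.StoreInt32(&globalLevel, int32(l)) }

// enabled reports whether an event at level l would be written.
func enabled(l Level) bool { return int32(l) >= atomic.LoadInt32(&globalLevel) }

func main() {
	SetGlobalLevel(InfoLevel)
	fmt.Println(enabled(DebugLevel), enabled(InfoLevel), enabled(ErrorLevel)) // false true true

	SetGlobalLevel(Disabled)
	fmt.Println(enabled(PanicLevel)) // false
}
```

Storing the level atomically is a design choice of this sketch, so the threshold can be changed safely while other goroutines are logging.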
#### Setting Global Log Level

This example uses command-line flags to demonstrate various outputs depending on the chosen log level.

```go
package main

import (
	"flag"

	"github.com/rs/zerolog"
	"github.com/rs/zerolog/log"
)

func main() {
	zerolog.TimeFieldFormat = zerolog.TimeFormatUnix
	debug := flag.Bool("debug", false, "sets log level to debug")

	flag.Parse()

	// Default level for this example is info, unless debug flag is present
	zerolog.SetGlobalLevel(zerolog.InfoLevel)
	if *debug {
		zerolog.SetGlobalLevel(zerolog.DebugLevel)
	}

	log.Debug().Msg("This message appears only when log level set to Debug")
	log.Info().Msg("This message appears when log level set to Debug or Info")

	if e := log.Debug(); e.Enabled() {
		// Compute log output only if enabled.
		value := "bar"
		e.Str("foo", value).Msg("some debug message")
	}
}
```

Info Output (no flag)

```bash
$ ./logLevelExample
{"time":1516387492,"level":"info","message":"This message appears when log level set to Debug or Info"}
```

Debug Output (debug flag set)

```bash
$ ./logLevelExample -debug
{"time":1516387573,"level":"debug","message":"This message appears only when log level set to Debug"}
{"time":1516387573,"level":"info","message":"This message appears when log level set to Debug or Info"}
{"time":1516387573,"level":"debug","foo":"bar","message":"some debug message"}
```

#### Logging without Level or Message

You may choose to log without a specific level by using the `Log` method. You may also write without a message by passing an empty string as the `msg` argument of the `Msg` method. Both are demonstrated in the example below.

```go
package main

import (
	"github.com/rs/zerolog"
	"github.com/rs/zerolog/log"
)

func main() {
	zerolog.TimeFieldFormat = zerolog.TimeFormatUnix

	log.Log().
		Str("foo", "bar").
		Msg("")
}

// Output: {"time":1494567715,"foo":"bar"}
```

### Error Logging

You can log errors using the `Err` method:

```go
package main

import (
	"errors"

	"github.com/rs/zerolog"
	"github.com/rs/zerolog/log"
)

func main() {
	zerolog.TimeFieldFormat = zerolog.TimeFormatUnix

	err := errors.New("seems we have an error here")
	log.Error().Err(err).Msg("")
}

// Output: {"level":"error","error":"seems we have an error here","time":1609085256}
```

> The default field name for errors is `error`; you can change it by setting `zerolog.ErrorFieldName`.

#### Error Logging with Stacktrace

Using `github.com/pkg/errors`, you can add a formatted stacktrace to your errors.

```go
package main

import (
	"github.com/pkg/errors"
	"github.com/rs/zerolog/pkgerrors"

	"github.com/rs/zerolog"
	"github.com/rs/zerolog/log"
)

func main() {
	zerolog.TimeFieldFormat = zerolog.TimeFormatUnix
	zerolog.ErrorStackMarshaler = pkgerrors.MarshalStack

	err := outer()
	log.Error().Stack().Err(err).Msg("")
}

func inner() error {
	return errors.New("seems we have an error here")
}

func middle() error {
	err := inner()
	if err != nil {
		return err
	}
	return nil
}

func outer() error {
	err := middle()
	if err != nil {
		return err
	}
	return nil
}

// Output: {"level":"error","stack":[{"func":"inner","line":"20","source":"errors.go"},{"func":"middle","line":"24","source":"errors.go"},{"func":"outer","line":"32","source":"errors.go"},{"func":"main","line":"15","source":"errors.go"},{"func":"main","line":"204","source":"proc.go"},{"func":"goexit","line":"1374","source":"asm_amd64.s"}],"error":"seems we have an error here","time":1609086683}
```

> `zerolog.ErrorStackMarshaler` must be set for the stack to be included in the output.

#### Logging Fatal Messages

```go
package main

import (
	"errors"

	"github.com/rs/zerolog"
	"github.com/rs/zerolog/log"
)

func main() {
	err := errors.New("A repo man spends his life getting into tense situations")
	service := "myservice"

	zerolog.TimeFieldFormat = zerolog.TimeFormatUnix

	log.Fatal().
		Err(err).
		Str("service", service).
		Msgf("Cannot start %s", service)
}

// Output: {"time":1516133263,"level":"fatal","error":"A repo man spends his life getting into tense situations","service":"myservice","message":"Cannot start myservice"}
// exit status 1
```

> NOTE: Using `Msgf` generates one allocation even when the logger is disabled.

### Create logger instance to manage different outputs

```go
logger := zerolog.New(os.Stderr).With().Timestamp().Logger()

logger.Info().Str("foo", "bar").Msg("hello world")

// Output: {"level":"info","time":1494567715,"message":"hello world","foo":"bar"}
```

### Sub-loggers let you chain loggers with additional context

```go
sublogger := log.With().
	Str("component", "foo").
	Logger()
sublogger.Info().Msg("hello world")

// Output: {"level":"info","time":1494567715,"message":"hello world","component":"foo"}
```

### Pretty logging

To log human-friendly, colorized output, use `zerolog.ConsoleWriter`:

```go
log.Logger = log.Output(zerolog.ConsoleWriter{Out: os.Stderr})

log.Info().Str("foo", "bar").Msg("Hello world")

// Output: 3:04PM INF Hello world foo=bar
```

To customize the configuration and formatting:

```go
output := zerolog.ConsoleWriter{Out: os.Stdout, TimeFormat: time.RFC3339}
output.FormatLevel = func(i interface{}) string {
	return strings.ToUpper(fmt.Sprintf("| %-6s|", i))
}
output.FormatMessage = func(i interface{}) string {
	return fmt.Sprintf("***%s****", i)
}
output.FormatFieldName = func(i interface{}) string {
	return fmt.Sprintf("%s:", i)
}
output.FormatFieldValue = func(i interface{}) string {
	return strings.ToUpper(fmt.Sprintf("%s", i))
}

log := zerolog.New(output).With().Timestamp().Logger()

log.Info().Str("foo", "bar").Msg("Hello World")

// Output: 2006-01-02T15:04:05Z07:00 | INFO  | ***Hello World**** foo:BAR
```

### Sub dictionary

```go
log.Info().
	Str("foo", "bar").
	Dict("dict", zerolog.Dict().
		Str("bar", "baz").
		Int("n", 1),
	).Msg("hello world")

// Output: {"level":"info","time":1494567715,"foo":"bar","dict":{"bar":"baz","n":1},"message":"hello world"}
```

### Customize automatic field names

```go
zerolog.TimestampFieldName = "t"
zerolog.LevelFieldName = "l"
zerolog.MessageFieldName = "m"

log.Info().Msg("hello world")

// Output: {"l":"info","t":1494567715,"m":"hello world"}
```

### Add contextual fields to the global logger

```go
log.Logger = log.With().Str("foo", "bar").Logger()
```

### Add file and line number to log

Equivalent of the standard library's `log.Llongfile` flag:

```go
log.Logger = log.With().Caller().Logger()
log.Info().Msg("hello world")

// Output: {"level": "info", "message": "hello world", "caller": "/go/src/your_project/some_file:21"}
```

Equivalent of the standard library's `log.Lshortfile` flag:

```go
zerolog.CallerMarshalFunc = func(pc uintptr, file string, line int) string {
	short := file
	for i := len(file) - 1; i > 0; i-- {
		if file[i] == '/' {
			short = file[i+1:]
			break
		}
	}
	file = short
	return file + ":" + strconv.Itoa(line)
}
log.Logger = log.With().Caller().Logger()
log.Info().Msg("hello world")

// Output: {"level": "info", "message": "hello world", "caller": "some_file:21"}
```

### Thread-safe, lock-free, non-blocking writer

If your writer might be slow or not thread-safe, and your log producers must never be slowed down by it, you can use a `diode.Writer` as follows:

```go
wr := diode.NewWriter(os.Stdout, 1000, 10*time.Millisecond, func(missed int) {
	fmt.Printf("Logger Dropped %d messages", missed)
})
log := zerolog.New(wr)
log.Print("test")
```

You will need to install `code.cloudfoundry.org/go-diodes` to use this feature.

### Log Sampling

```go
sampled := log.Sample(&zerolog.BasicSampler{N: 10})
sampled.Info().Msg("will be logged every 10 messages")

// Output: {"time":1494567715,"level":"info","message":"will be logged every 10 messages"}
```

More advanced sampling:

```go
// Lets up to 5 debug messages through per 1-second period.
// Beyond those 5, only 1 in every 100 debug messages is logged.